Projects

Shotoclock.io: COVID-19 Vaccine Appointment Availability Notifier

SMS, email, and Twitter notifications for appointments scraped from multiple sources, filtered by ZIP code and radius. Built with Tony Peng.
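The radius filter can be illustrated with a great-circle distance check. This is only a minimal sketch, not the project's actual code: the field names (`lat`, `lon`, `radius_miles`, `contact`) are hypothetical, and it assumes each ZIP code has already been resolved to a latitude/longitude pair.

```python
import math

EARTH_RADIUS_MILES = 3958.8  # mean Earth radius


def haversine_miles(lat1, lon1, lat2, lon2):
    """Great-circle distance in miles between two (lat, lon) points in degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_MILES * math.asin(math.sqrt(a))


def subscribers_to_notify(appointment, subscribers):
    """Return contacts of subscribers whose search radius covers the appointment site.

    Both arguments use hypothetical dictionary layouts chosen for illustration.
    """
    return [
        s["contact"]
        for s in subscribers
        if haversine_miles(s["lat"], s["lon"], appointment["lat"], appointment["lon"])
        <= s["radius_miles"]
    ]
```

A subscriber is notified only when the appointment site falls inside the radius they chose around their own location.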

View Project

N Chainz: A High Performance Decentralized Cryptocurrency Exchange

Winner of the Binance Decentralized Exchange Competition ($60k prize)

Centralized exchanges rely on trusting that their owners will take the proper security precautions. N Chainz is a decentralized cryptocurrency exchange. Specifically, N Chainz’s features include block generation, limit orders, and the ability to trade a base token with another token. We use a novel multi-chain consensus structure to increase performance and scalability. Built with Nicholas Egan and Lizzie Wei.
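To make "limit orders" concrete, here is a generic sketch of matching an incoming buy limit order against resting sell orders by price. It is a textbook illustration under simplifying assumptions (integer prices and quantities, a single in-memory list), not N Chainz's matching engine, and it says nothing about the multi-chain consensus layer.

```python
def match_buy(limit_price, quantity, asks):
    """Match a buy limit order against resting sell orders.

    asks is a list of (price, quantity) tuples. Returns a tuple of
    (fills, unfilled_quantity, remaining_asks).
    """
    fills = []
    remaining_asks = []
    # Cheapest asks are consumed first; sorted() is stable, so asks at the
    # same price keep their original (arrival) order.
    for price, qty in sorted(asks, key=lambda ask: ask[0]):
        if quantity > 0 and price <= limit_price:
            traded = min(quantity, qty)
            fills.append((price, traded))
            quantity -= traded
            if qty > traded:
                remaining_asks.append((price, qty - traded))
        else:
            remaining_asks.append((price, qty))
    return fills, quantity, remaining_asks
```

Any quantity left unfilled would rest on the book as a new bid at `limit_price`.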

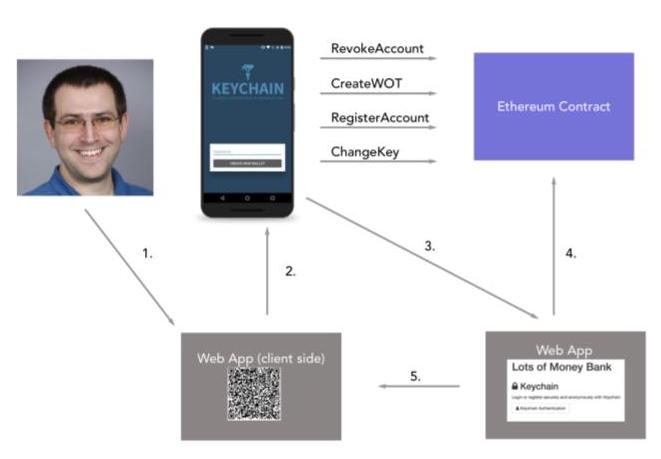

KeyChain: Distributed Authentication on the Ethereum Blockchain

KeyChain is a trustless authentication system that stores username-to-public-key mappings on the Ethereum blockchain. KeyChain makes asymmetric cryptography usable for normal users by providing a "web of trust" recovery system where users can recover lost or compromised private keys without a third party. Built with Sarah Wooders and Michael Shumikhin.

View Project

FashionModel: Mapping Images of Clothes to an Embedding Space

Created a dataset of 373,521 images and trained a captioning neural network to allow for fine-tuned captioning of clothing images (performing substantially better than existing models). We then created methods to take either an image or a caption and produce an embedding vector, which allowed for more accurate nearby searches as well as manipulations. Built with Sarah Wooders.
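A toy sketch of what "nearby searches" and "manipulations" in an embedding space can look like. The vectors, item names, and the choice of cosine similarity are illustrative assumptions; the real embeddings come from the trained network and are not reproduced here.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors (0.0 if either is zero)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def nearest(query, catalog, k=3):
    """Return the names of the k catalog items most similar to the query vector."""
    ranked = sorted(
        catalog.items(),
        key=lambda item: cosine_similarity(query, item[1]),
        reverse=True,
    )
    return [name for name, _ in ranked[:k]]


def shift_embedding(vector, remove, add):
    """Manipulate an embedding by subtracting one attribute vector and adding another."""
    return [v - r + a for v, r, a in zip(vector, remove, add)]
```

For example, subtracting a hypothetical "red" direction from a red dress and adding a "blue" direction should land near blue dresses in the catalog.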

View Project

Open Mic+ (4,000,000+ Downloads)

Built in high school. Uses speech recognition to listen for "Okay Google" in the background and launch Google Now. Launched months before Google added this feature themselves.

View Project

Commandr (1,500,000+ Downloads)

Built in high school. Uses an accessibility service to intercept commands to Google Now and run custom commands.

View Project

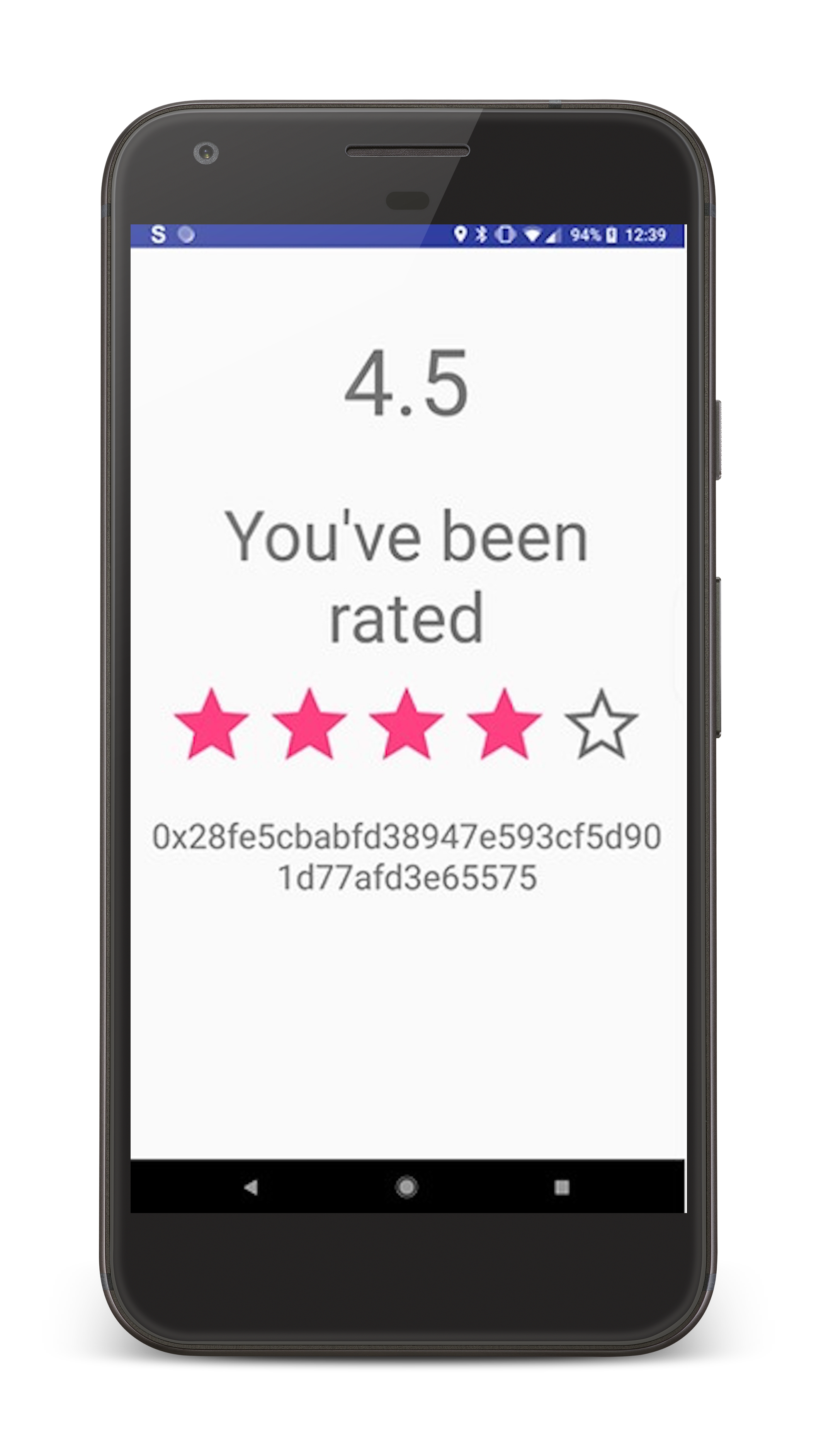

RepNet: A Distributed Reputation Network Built on the Blockchain

As shown in the Black Mirror episode “Nosedive”, online reputation systems have the potential to be extremely powerful and dangerous. We believe that online reputation systems are inevitable. However, we believe that if such a system is ultimately going to exist, it should be decentralized rather than controlled by private corporations or governments. We wanted to see what such a system would look like and how it would operate, so we developed a distributed reputation app using Android, the Ethereum blockchain, and the EigenTrust algorithm. Built with Sarah Wooders and Michael Shumikhin.
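For readers unfamiliar with EigenTrust, the core idea is a power iteration over normalized local trust scores. The following is a minimal centralized sketch of that basic iteration, assuming a small dense matrix of ratings; the function names, the `alpha` default, and the uniform pre-trusted distribution are illustrative choices, and nothing here reflects how RepNet stores or computes scores on-chain.

```python
def normalize_rows(local, fallback):
    """Clip negative ratings to zero and scale each row to sum to 1.

    A peer that trusts nobody falls back to the given distribution.
    """
    normalized = []
    for row in local:
        clipped = [max(value, 0.0) for value in row]
        total = sum(clipped)
        if total == 0:
            normalized.append(list(fallback))
        else:
            normalized.append([value / total for value in clipped])
    return normalized


def eigentrust(local, pretrusted=None, alpha=0.1, tol=1e-9, max_iter=1000):
    """Compute global trust scores from local[i][j], peer i's rating of peer j.

    Iterates t <- (1 - alpha) * C^T t + alpha * p until the change is below tol.
    """
    n = len(local)
    p = pretrusted or [1.0 / n] * n
    c = normalize_rows(local, p)
    t = list(p)
    for _ in range(max_iter):
        nxt = [
            (1 - alpha) * sum(c[i][j] * t[i] for i in range(n)) + alpha * p[j]
            for j in range(n)
        ]
        if sum(abs(a - b) for a, b in zip(nxt, t)) < tol:
            return nxt
        t = nxt
    return t
```

The resulting scores sum to 1, and a peer's score grows when it is rated well by peers that are themselves trusted.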

View Project

SocialEyes AR: Facial Recognition and Eulerian Video Magnification for Heart Rate Detection in AR

Facebook Global Hackathon Finalist and Top 8, Best Facebook Hack, YHacks at Yale University

Once you've talked to a person for a certain amount of time, SocialEyes recognizes and stores their face on the Android app. It groups those faces by person, and you then have the ability to connect their face to their Facebook account. From then on, whenever you encounter that person, SocialEyes tracks and recognizes the face and brings up their information in a HUD environment. SocialEyes can even tell you the person's heart rate as you are talking to them. Built with Logan Engstrom, Michael Shumikhin, and Logan Taylor.

Metacast: Android as an Augmented Reality Hologram

2nd Place in Crowd Vote and Best AR Hack, TreeHacks at Stanford University

We hacked Android to access the screen, live broadcast it, and control the touchscreen via code! We used Firebase as a realtime update mechanism for storing our data, which proved to be challenging as Unity does not have a library for Firebase. We hacked together our own Unity Firebase library in order to turn the vision into reality. Using certain Linux commands, we were able to output the screen's buffer into a PNG file, which we read back, resized, and uploaded to Firebase as a Base64 string. We relayed touch coordinates and turned them into taps on the touchscreen via Linux commands that simulate touchscreen input. Built with Mohammad Adib and Andrew Nguyen.
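A rough sketch of the two data paths described above. The write-up does not name the commands used, so `screencap -p` and `input tap` (standard Android shell utilities) are assumptions here, as are the file path, the normalized coordinate format, and every function name. The sketch only builds the command arguments and the Base64 payload; it does not run anything on a device or talk to Firebase.

```python
import base64


def screenshot_command(path="/sdcard/frame.png"):
    """Arguments for dumping the current screen to a PNG file (assumed command)."""
    return ["screencap", "-p", path]


def encode_frame(png_bytes):
    """Encode raw PNG bytes as a Base64 string suitable for storing as text."""
    return base64.b64encode(png_bytes).decode("ascii")


def tap_command(x_norm, y_norm, width, height):
    """Arguments for simulating a tap (assumed command).

    x_norm and y_norm are relayed coordinates in [0, 1]; they are scaled to
    device pixels and clamped to the screen bounds.
    """
    x = min(max(int(x_norm * width), 0), width - 1)
    y = min(max(int(y_norm * height), 0), height - 1)
    return ["input", "tap", str(x), str(y)]
```

Sending normalized coordinates keeps the viewer independent of the phone's resolution; only the device side needs to know the real pixel size.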

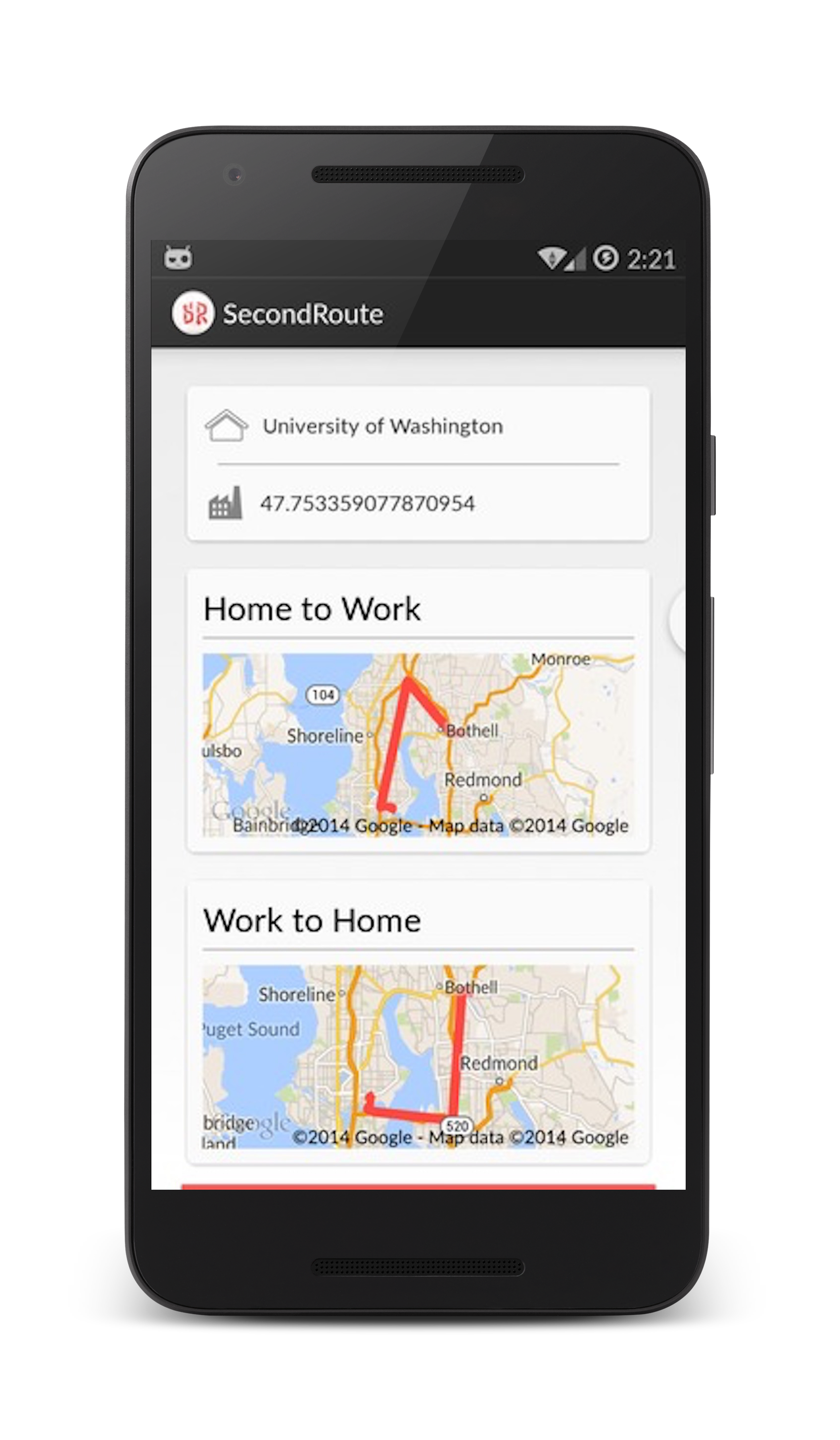

SecondRoute Traffic-Rerouting: Automatic Alerts for Alternative Routes

2nd Place and Best Microsoft Hack, DubHacks at University of Washington

Problem: GPS apps are super useful even when you know how to get from point A to point B because they will route you away from traffic. But it is a pain to have to start the GPS every time you get in the car. It also drains your battery and forces you to listen to directions you already know.

Solution: Use GPS, Wi-Fi, and accelerometer data to detect when you are driving. Then perform analysis on the possible routes (using the Bing Maps API) to determine if you are attempting to drive home and if you are taking the optimal route. Once it detects you are driving, it will periodically check if your preferred route is still the fastest. If there is a better route, it will let you know and ask if you wish to start navigation. Built with Joseph Zhong.
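The periodic check boils down to comparing the preferred route's current travel time against the alternatives. Below is a minimal sketch of that comparison only, assuming travel times have already been fetched; the dictionary layout and the `min_saving_minutes` threshold are hypothetical, and no Bing Maps API calls are shown.

```python
def better_route(preferred, candidates, min_saving_minutes=5.0):
    """Return a faster alternative worth alerting about, or None.

    preferred and each candidate are dicts with hypothetical "name" and
    "minutes" keys. An alert is raised only if the fastest candidate beats
    the preferred route by at least min_saving_minutes.
    """
    fastest = min(candidates, key=lambda route: route["minutes"], default=None)
    if fastest is None or fastest["name"] == preferred["name"]:
        return None
    if preferred["minutes"] - fastest["minutes"] >= min_saving_minutes:
        return fastest
    return None
```

The threshold keeps the app quiet when the alternative saves only a minute or two, which fits the goal of not nagging drivers on routes they already know.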